Ad creative testing: clone, test, kill, scale

You don't need better ads. You need more ads, tested faster.

The e-commerce seller who ran 30 ad creative tests last month found two winners. The one who ran 3 found nothing and blamed the product. Same niche. Same audience targeting. Different testing velocity.

Ad creative testing — systematically producing, launching, and evaluating multiple ad variations to find what converts — isn't about finding one perfect ad. It's about producing enough variations to give the math a chance, reading the data quickly, and iterating before the window closes. The brands winning at ad testing right now aren't more creative — they're faster. Here's the system.

Why most ad creative testing fails

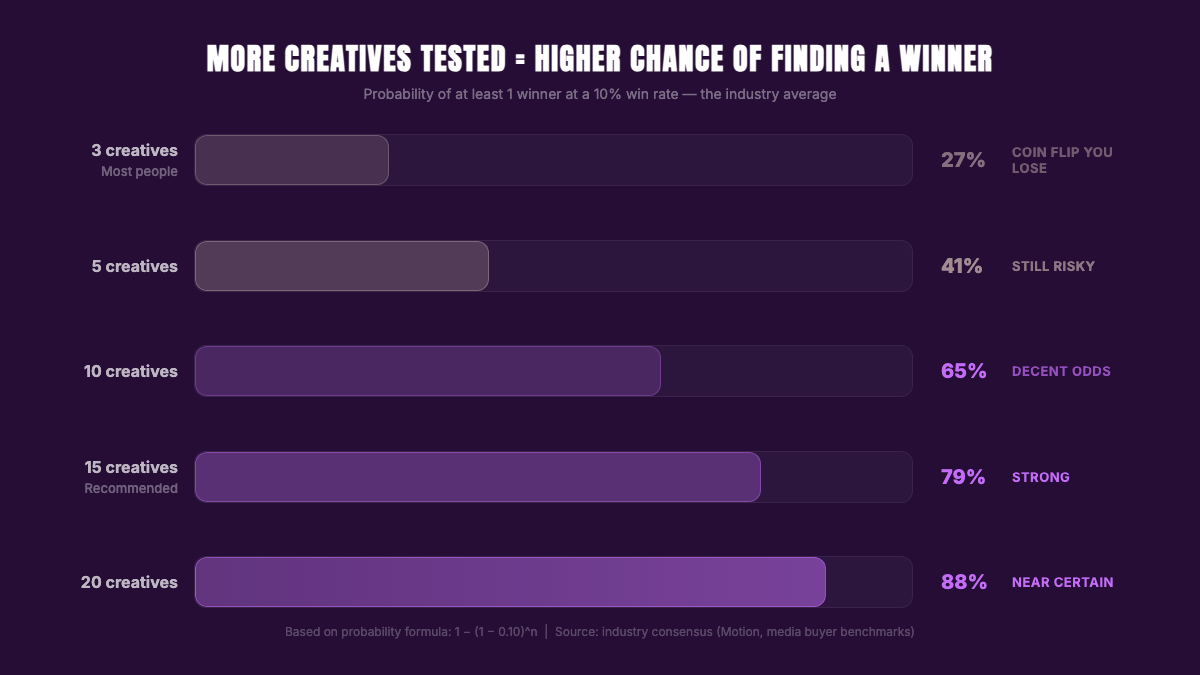

Roughly 10-15% of ad creatives become winners. The rest break even or lose money. That's not a guess — it's consistent across media buyers, creative testing platforms, and Meta's own reporting.

If 1 in 10 creatives becomes a winner, testing 3 gives you a 27% chance of finding at least one that works (1 - 0.9^3 ≈ 0.27). At that volume, nearly 3 out of 4 product tests fail, not because the product is bad, but because you didn't test enough angles.

Every media buyer knows this. "Test more creatives" is standard advice. But nobody addresses the bottleneck: producing creative variations takes time. Brief a designer, wait a couple hours for the deliverable, request a revision, wait again. Multiply that by 10 variations and you've spent a full day just on creative production — time you could have spent analyzing data and iterating.

The constraint isn't money. It's iteration speed. The faster you can produce and test creative variations, the faster you learn what works.

The clone-and-test method

Most ad creative testing starts from scratch. Open a blank canvas, pick a layout, write copy, export, test, learn nothing, start over.

Clone-and-test flips that. You start from an ad that already works — a proven layout with validated visual hierarchy — and rebuild it with your product. The design question is already answered. Now you're testing whether your product resonates in that proven frame.

Four steps. Each one removes a variable that slows down most creative testing workflows.

Step 1: Find ads that already convert

Winning ads are public. The platforms show you what's running and for how long.

Free sources:

- Meta Ad Library — search any brand or keyword. Filter by active ads. An ad running for 30+ days is almost certainly profitable.

- TikTok Creative Center — top ads by industry, with performance metrics.

- Your own feed. Screenshot ads that stop your scroll.

Paid sources:

- AdSpy ($149/mo) — largest ad database. Filter by engagement, run time, niche.

- Minea ($49/mo) — built for product research, includes ad spy tools.

What to look for: high engagement, long run time, multiple ad formats running simultaneously. If an advertiser is spending money on an ad for weeks, it's converting. Pay attention to recurring layout patterns — split-screen product shots, lifestyle backgrounds with product overlays, minimal product-on-color, or before-and-after comparisons. Browse proven layouts organized by category to find patterns worth testing.

Step 2: Clone the layout, swap your product

Take the winning ad's layout — its visual structure, composition, and hierarchy — and rebuild it with your product. Not the copy. Not the branding. The structure that made it work.

AdDogs does this in seconds. Upload your product photo, use the winning ad as a reference, and the AI rebuilds the composition with your product swapped in. Brand colors get extracted from your logo and applied automatically. Free and Basic include 3 dimensions (square 1:1, portrait 9:16, landscape 16:9); Pro and Ultimate unlock all 14.

Produce 5-10 variations per product using different reference ads. Each one takes seconds. You can have 10 ready to test in under two minutes — same-day, no design skills required.

You'll still need to write your own ad copy — headlines and primary text. But the visual creative, which is what stops the scroll, is handled. And that's the variable most people struggle with.

Here's why this matters for ad creative testing: you're isolating the product variable. The layout is proven. The composition is tested. If the ad doesn't convert, it's the product or the offer — not the design. That diagnostic clarity is impossible when you're designing from scratch.

Step 3: Launch your test structure

Campaign structure for testing:

- Campaign type: ABO (ad set budget optimization). CBO is for scaling, not testing. You want equal budget across all creatives so the data is comparable.

- Ad sets: 1 creative per ad set. Don't stack multiple creatives in one ad set — Meta will allocate budget unevenly and you won't get clean data.

- Budget: $5-10/day per ad set. Lower than $5 and you won't exit Meta's learning phase fast enough.

- Targeting: Broad or 1-2 interest stacks. Don't over-target during testing — you want the algorithm to find your buyers.

- Duration: 48-72 hours minimum. Don't touch anything before 48 hours. Changing variables mid-flight resets the learning phase.

This ABO structure is the foundation of effective A/B testing of ad creatives at scale. It works for Meta. TikTok's algorithm rewards creative variety similarly, so the same logic applies, though TikTok campaigns tend to favor broader targeting and shorter testing windows (24-48 hours) given its faster learning cycles.
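To make the structure concrete, here is a minimal planning sketch. It does not call any ad platform API. The `AdSet` class, `build_test_plan`, and `planned_spend` are hypothetical names used only for illustration, and the creative file names are placeholders.

```python
from dataclasses import dataclass


@dataclass
class AdSet:
    name: str
    creative: str        # exactly one creative per ad set
    daily_budget: float  # USD per day, set at the ad set level (ABO)
    targeting: str


def build_test_plan(creatives, daily_budget=5.0, targeting="broad"):
    """Return one ad set per creative, all with the same daily budget."""
    if daily_budget < 5.0:
        raise ValueError("the article recommends at least $5/day per ad set")
    return [
        AdSet(name=f"test-{i:02d}", creative=c,
              daily_budget=daily_budget, targeting=targeting)
        for i, c in enumerate(creatives, start=1)
    ]


def planned_spend(plan, hours=48):
    """Total spend across all ad sets if each runs untouched for `hours`."""
    return sum(ad_set.daily_budget for ad_set in plan) * hours / 24


# Placeholder creative names: 10 variations for one product.
plan = build_test_plan([f"creative_{n}.png" for n in range(1, 11)])
print(len(plan), planned_spend(plan), planned_spend(plan, hours=72))
# prints: 10 100.0 150.0
```

Ten creatives at $5/day works out to roughly $100 for a 48-hour read and $150 if you let the test run the full 72 hours.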

Step 4: Read the data at 48 hours

At the 48-hour mark, check three metrics:

| Metric | What to look for | How to read it |
|---|---|---|
| CTR (link) | >1.5% = strong, 1-1.5% = promising, <0.8% = weak | Low CTR = bad hook or wrong audience |
| CPC | Compare to your niche average | High CPC + high CTR = the audience clicks but they're expensive to reach |
| Add-to-cart rate | Any add-to-carts = the creative is generating real purchase interest | Zero ATCs after $15+ spend = the creative isn't converting interest into action |

Don't check ROAS yet. At $5-10/day for 48 hours, you have $10-20 of spend per creative. That's enough to judge engagement, not profitability. Testing spend is one line on your full Facebook ad cost stack — media, creative, tools, and platform fees all compound on top.
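If you export the numbers to a spreadsheet or script, the 48-hour read can be sketched like this. The function names are hypothetical, and the "unclassified" label for CTRs between 0.8% and 1% is an assumption, since the table above does not cover that range.

```python
def link_ctr(link_clicks, impressions):
    """Link CTR as a percentage; 0.0 when there are no impressions yet."""
    return 100.0 * link_clicks / impressions if impressions else 0.0


def ctr_signal(ctr_pct):
    """Map a link CTR (in percent) to the bands from the table."""
    if ctr_pct > 1.5:
        return "strong"
    if ctr_pct >= 1.0:
        return "promising"
    if ctr_pct < 0.8:
        return "weak"
    return "unclassified"  # assumption: 0.8-1.0% is not covered by the table


def atc_signal(add_to_carts, spend):
    """Read add-to-cart data against the $15 spend mark from the table."""
    if add_to_carts > 0:
        return "purchase interest"
    if spend >= 15:
        return "not converting interest into action"
    return "too early to say"


print(ctr_signal(link_ctr(18, 1000)), "|", atc_signal(0, 12))
```

Here 18 link clicks on 1,000 impressions is a 1.8% CTR, which lands in the strong band, while zero add-to-carts at $12 of spend is still too early to judge.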

When to kill an ad creative

Killing ads is where most people either move too fast (cutting before enough data) or too slow (bleeding money on losers). Use these thresholds:

Kill immediately if:

- CTR below 0.5% after $15+ spend. The hook isn't working.

- Zero add-to-carts after $20+ spend. People see the ad but nobody's interested enough to act.

Kill at 72 hours if:

- CPA is more than 2x your break-even. Break-even CPA (your max CPA) = product price - product cost - shipping. If the ad is running at double that after 72 hours, cut it.

- CPM has spiked 50%+ from day 1 to day 3. The audience is saturated.

Never kill based on:

- Less than $10 total spend. Not enough data to judge anything.

- One bad day in a three-day test. Look at the trend, not the snapshot.

- Gut feeling. Use the numbers.
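The thresholds above translate into a short decision function. This is a sketch under stated assumptions, not a rule engine: the function and parameter names are hypothetical, and the order in which the rules are checked is my choice. The "one bad day" rule is not encoded, because it needs the daily trend rather than totals.

```python
def break_even_cpa(price, product_cost, shipping):
    """Max CPA: what is left of the sale price after product and shipping."""
    return price - product_cost - shipping


def kill_decision(spend, ctr_pct, add_to_carts, hours_running,
                  cpa=None, max_cpa=None, cpm_day1=None, cpm_day3=None):
    """Return 'keep' or a 'kill: ...' reason based on the listed thresholds."""
    if spend < 10:
        return "keep: under $10 spend, not enough data"
    if spend >= 15 and ctr_pct < 0.5:
        return "kill: CTR below 0.5% after $15+ spend"
    if spend >= 20 and add_to_carts == 0:
        return "kill: zero add-to-carts after $20+ spend"
    if hours_running >= 72:
        if cpa is not None and max_cpa is not None and cpa > 2 * max_cpa:
            return "kill: CPA above 2x break-even at 72 hours"
        if cpm_day1 and cpm_day3 and cpm_day3 >= 1.5 * cpm_day1:
            return "kill: CPM up 50%+ from day 1 to day 3"
    return "keep"


# Illustrative numbers only: a $40 product, $12 cost, $5 shipping.
max_cpa = break_even_cpa(40, 12, 5)
print(max_cpa)  # 23
print(kill_decision(spend=30, ctr_pct=1.1, add_to_carts=3,
                    hours_running=72, cpa=50, max_cpa=max_cpa))
```

With a $23 break-even, a $50 CPA at 72 hours is more than double the limit, so the second call returns the CPA kill reason.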

When to scale a winner

A winner meets three criteria over 3+ days:

- CPA below break-even. Consistently, not just on one lucky day.

- Stable or declining CPM. Rising CPM means the audience is fatiguing.

- Repeatable conversion pattern. At least 5-10 conversions, not 2 lucky purchases.

How to scale:

Duplicate the winning ad set at 1.5-2x the budget. Don't edit the original — editing resets the learning phase. Run both in parallel for 48 hours. If the duplicate performs, duplicate again.
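As a rough sketch, the three criteria and the duplicate budget can be checked like this. The function names are hypothetical. Treating "stable or declining CPM" as "the latest day's CPM is no higher than the first day's" is a simplifying assumption, and the default of 5 conversions is the low end of the 5-10 range above.

```python
def is_winner(daily_cpa, daily_cpm, conversions, max_cpa, min_conversions=5):
    """daily_cpa and daily_cpm are per-day lists, oldest day first."""
    if len(daily_cpa) < 3 or len(daily_cpm) < 3:
        return False  # the criteria call for 3+ days of data
    cpa_below_break_even = all(cpa < max_cpa for cpa in daily_cpa)
    # Assumption: "stable or declining" means last day <= first day.
    cpm_stable_or_falling = daily_cpm[-1] <= daily_cpm[0]
    return (cpa_below_break_even and cpm_stable_or_falling
            and conversions >= min_conversions)


def scaled_budget(current_daily_budget, multiplier=1.5):
    """Daily budget for the duplicated ad set; the original stays untouched."""
    if not 1.5 <= multiplier <= 2.0:
        raise ValueError("the article suggests duplicating at 1.5-2x")
    return current_daily_budget * multiplier


# Illustrative numbers only.
if is_winner([18, 20, 17], [11.0, 10.5, 10.8], conversions=7, max_cpa=23):
    print("duplicate at", scaled_budget(10.0), "per day")
```

One day with CPA above break-even fails the check, which matches the "consistently, not just on one lucky day" requirement.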

While scaling, produce 5-10 fresh variations of the winning ad's layout immediately. Different product angles, different backgrounds, same proven structure. This is your fatigue insurance — and if you're producing variations in seconds, you can have them ready before the original starts to tire.

How many creatives does ad creative testing require

Here's the math that changes how you approach ad creative testing. Assume a 10% winner rate — meaning 1 in 10 creatives you test will become profitable. That's consistent with industry benchmarks.

| Creatives tested | Chance of at least 1 winner |
|---|---|
| 3 | 27% |
| 5 | 41% |
| 10 | 65% |
| 15 | 79% |
| 20 | 88% |

Testing 3 creatives is a bet you lose nearly 3 out of 4 times. Testing 15 gives you nearly 4-in-5 odds.
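The table follows from one formula: if each creative wins independently with probability p, the chance of at least one winner in n tests is 1 - (1 - p)^n. The sketch below reproduces the table and inverts the formula to ask how many creatives a target probability requires. Independence between creatives is an assumption, and the function names are hypothetical.

```python
import math


def chance_of_winner(n, win_rate=0.10):
    """Probability of at least one winner among n independent creatives."""
    return 1 - (1 - win_rate) ** n


def creatives_needed(target, win_rate=0.10):
    """Smallest n whose chance of at least one winner reaches `target`."""
    return math.ceil(math.log(1 - target) / math.log(1 - win_rate))


for n in (3, 5, 10, 15, 20):
    print(n, f"{chance_of_winner(n):.0%}")
# prints 27%, 41%, 65%, 79%, 88% for the five rows

print(creatives_needed(0.80))  # 16
```

At a 10% winner rate, an 80% chance of at least one winner takes 16 creatives, which is why 15 lands just under 4-in-5.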

The blocker for most people isn't budget — it's production time. Making 15 ad variations in Canva takes a full afternoon. A freelancer can turn around a static ad in a couple hours, but multiply that by 15 and factor in back-and-forth, and you're looking at a full day or more before you can launch.

When production drops to seconds per variation — using reference-based tools like AdDogs that clone proven layouts — testing 15 becomes a 3-minute task. Produce the full test batch, launch it the same morning, have data by tomorrow, iterate by afternoon. That speed compounds over weeks.

Creative fatigue: how to spot it and respond

Even winning ads die. For e-commerce audiences — especially smaller, targeted segments — creative fatigue sets in around 3-7 days.

The warning signs arrive in sequence:

- CTR drops first. The same people keep seeing the same ad. They stop clicking.

- CPM rises next. Meta raises your cost because engagement is falling.

- CPA increases last. Fewer clicks + higher costs = more expensive conversions.

When ad creative fatigue hits (usually day 3-5 as CTR dips), don't wait for CPA to blow up. Swap in fresh variations of the same winning layout. Not new concepts — new executions of the concept that already works.
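One way to catch the sequence early is to compare each metric against its own baseline. This is a sketch under loud assumptions: the article gives no numeric trigger for a CTR dip, so the 20% threshold here is arbitrary and should be tuned to your account, and the function names are hypothetical.

```python
def ctr_drop_from_peak(daily_ctr):
    """Fractional drop of the latest day's CTR from the best day so far."""
    peak = max(daily_ctr)
    return 0.0 if peak == 0 else (peak - daily_ctr[-1]) / peak


def fatigue_stage(daily_ctr, daily_cpm, daily_cpa, threshold=0.20):
    """Per-day lists, oldest first. `threshold` (20%) is an assumed trigger."""
    ctr_down = ctr_drop_from_peak(daily_ctr) >= threshold
    cpm_up = daily_cpm[-1] >= daily_cpm[0] * (1 + threshold)
    cpa_up = daily_cpa[-1] >= daily_cpa[0] * (1 + threshold)
    if ctr_down and cpm_up and cpa_up:
        return "late: CPA is already rising"
    if ctr_down and cpm_up:
        return "mid: CPM is rising, CPA is next"
    if ctr_down:
        return "early: rotate in fresh variations now"
    return "healthy"


# Illustrative numbers only: CTR has dipped, CPM and CPA have not moved much.
print(fatigue_stage([1.8, 1.7, 1.3], [10.0, 10.5, 11.0], [18.0, 18.5, 19.0]))
```

The point of the staging is the "early" branch: a CTR dip alone is the cue to swap in new executions, before the other two metrics confirm the problem.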

Speed matters here more than anywhere else. If producing new variations takes a few hours of back-and-forth with a designer, your winner is bleeding performance while you wait. If it takes minutes, you can clone 5 new variations of the winning layout, upload them as fresh ad sets, and pause the fatigued original — all in the same session where you spotted the dip.

Static ads give you the fastest iteration loop here. Video requires shooting, editing, and rendering. Static variations can be produced and launched within minutes. For fighting Facebook creative fatigue and keeping winners alive longer, static creative testing is the faster feedback cycle.

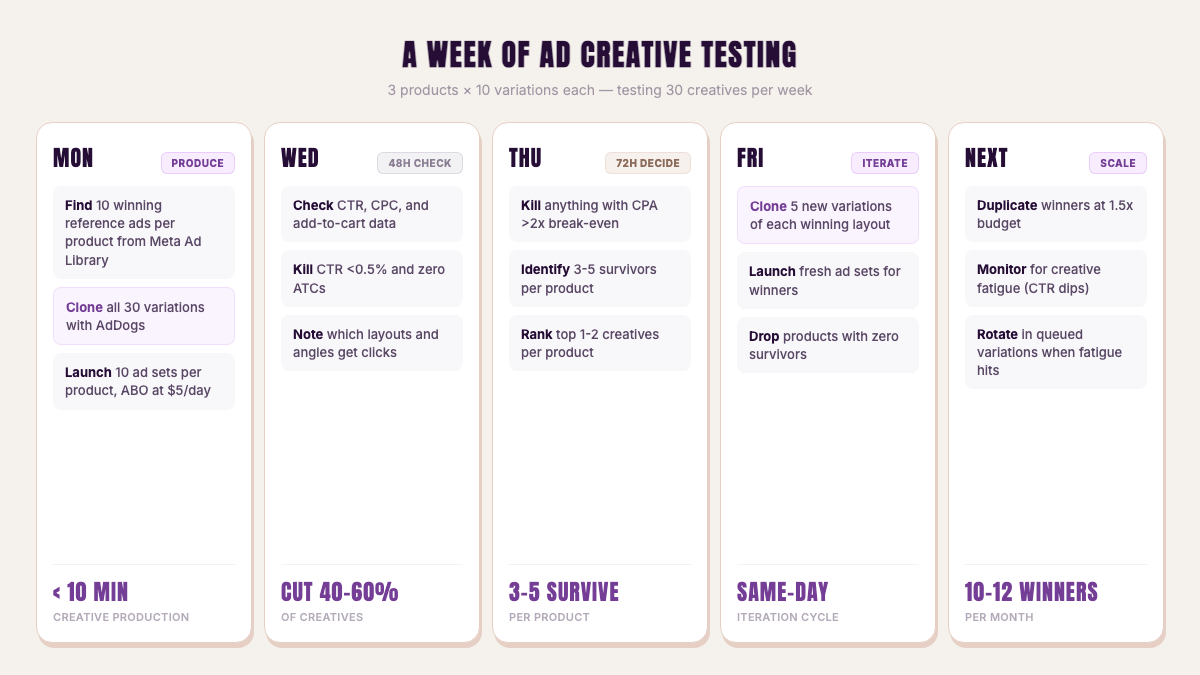

A week of ad creative testing

Here's what a structured testing week looks like for someone testing 3 products:

Monday: Find 10 winning reference ads per product from Meta Ad Library and TikTok Creative Center. Clone all 30 variations using AdDogs — under 10 minutes total. Launch 10 ad sets per product in ABO at $5/day.

Wednesday (48 hours in): Check CTR, CPC, and add-to-cart data. Kill anything with CTR <0.5% and zero ATCs. You'll likely cut 40-60% of creatives. Note which layouts and angles are getting clicks.

Thursday (72 hours in): Kill anything with CPA >2x break-even. You should have 3-5 surviving creatives per product. Identify your top 1-2.

Friday: For products with winners, clone 5 new variations of the winning layout with different product angles. Launch as fresh ad sets. For products with zero survivors, move on — clone-and-test gave you diagnostic clarity. The ad layouts were proven; the product didn't resonate.

The following week: Scale winners (duplicate at 1.5x budget). Monitor for creative fatigue. When CTR starts dipping on a winner, you already have fresh variations queued from Friday's production run.

Over a month, this cycle tests 120+ creatives across 12 products. At a 10% winner rate, that's 10-12 winning ad creatives identified — enough to build a profitable ad account.

Open the static ad generator and try it with your first product. Five free credits, no card.

FAQ

How many ad creatives should I test per product?

Minimum 10, ideally 15. At a 10% winner rate, testing 10 creatives gives you a 65% chance of finding a winner. Testing 15 pushes that to 79%. Testing 3 — which is what most people do — gives you only 27%.

When should I kill an underperforming ad?

Kill as soon as CTR is below 0.5% after $15+ spend, or you have zero add-to-carts after $20+ spend. Kill at 72 hours if your CPA is more than 2x your break-even. Never kill based on less than $10 total spend.

How long before creative fatigue sets in?

For targeted e-commerce audiences, creative fatigue typically hits at 3-7 days. Watch for CTR drops as the first signal — that's your cue to rotate in fresh variations before CPM spikes and CPA follows.

Is it better to test static images or video ads?

Both convert, but static images allow faster iteration. You can produce and launch 10 static variations in minutes versus hours for video. For initial ad creative testing, static gives you more data points per day. Scale winners into video once you've identified the angles that work.

How do I know if it's the product or the ad that's failing?

Clone 10+ different ad layouts from proven winners and test them with your product. If none convert, the product is the problem — the ad layouts were already validated. If 1-2 convert, the product works and you've found your winning angle. Clone-and-test isolates the product variable by removing design quality as a factor.