GPT Image 2 for ads: an honest test of OpenAI's new model

OpenAI launched GPT Image 2 on April 21, 2026. Twelve hours later it took #1 on every Image Arena leaderboard with a 242-point margin over Nano Banana 2, the biggest gap the leaderboard has recorded. Cue the launch-day takes.

The follow-up nobody answered: is GPT Image 2 for ads actually better, or is the benchmark winner just better at benchmarks?

We ran it through the workflow that matters for someone shipping ads this week. Meta static, Instagram 4:5, 9:16 Story, multi-aspect-ratio export. We tracked where it shipped, where it stalled, and where it just refused.

What actually changed in GPT Image 2

GPT Image 2 is a generational jump. The Image Arena swing — +242 points on text-to-image, four times the largest jump anyone had recorded — is the headline. The rest of the spec sheet holds up too.

Native reasoning before generation. GPT Image 2 is the first image model with O-series reasoning baked in. Before it draws, it interprets the prompt, plans the composition, and self-checks the output. Reasoning effort is exposed as a parameter — off, low, medium, high. More reasoning means more accurate output and a longer wait.

Resolution and aspect ratios that match real ad surfaces. Up to 2K standard, 4K in beta. Aspect ratios from 3:1 ultra-wide to 1:3 ultra-tall. Real native resolution, not upscaled.

Up to 8 connected images per prompt with character and object continuity. Plus, Pro, and Business subscribers get this in Thinking mode. Variant testers should pay attention here. Generate 8 versions of the same product on 8 different backdrops, in one call, with the bottle preserved across all of them.

Text rendering. ~99% accuracy on Latin scripts, 95%+ on Japanese, Korean, Chinese, Hindi, and Bengali. TechCrunch's contrast — a 2024 DALL-E 3 Mexican menu with "enchuita," "churiros," "burrto," "margartas" against an Images 2.0 menu that reads cleanly — is the demo every ad creative will notice.

Reference-guided editing loop with partial regeneration. Upload an image, ask for "make the background warmer" or "move the can two inches to the left," and the model modifies the relevant region instead of regenerating the entire frame. Cheaper than full regen. Edits stay coherent across turns.

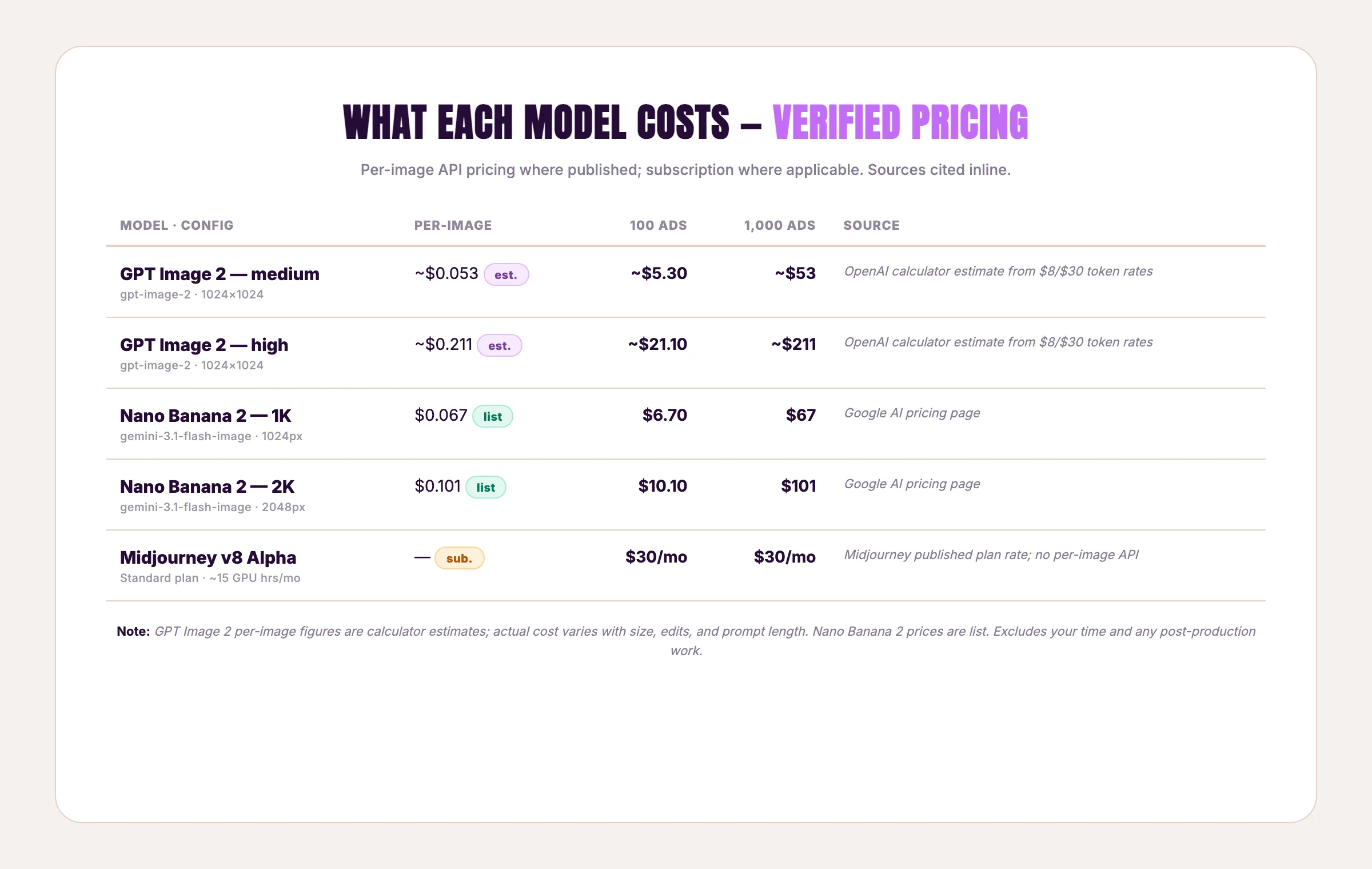

Pricing. Token-based on the API: $8 per million image input tokens, $30 per million image output tokens, per OpenAI's pricing page. Translated through OpenAI's calculator, that's roughly $0.006 (low quality), $0.053 (medium), and $0.211 (high) per 1024×1024 image — figures cited consistently across third-party guides like Build Fast With AI's developer breakdown. Actual cost depends on size, edit operations, and prompt length.
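To make the token math concrete, here is a minimal sketch that turns the quoted rates into per-call costs and works backward from the per-image figures. It assumes image output tokens dominate and ignores input tokens for the implied counts; the function names are ours, not OpenAI's.

```python
# Rates quoted above (USD per one million image tokens).
INPUT_RATE_PER_M = 8.00
OUTPUT_RATE_PER_M = 30.00

# Per-image figures quoted above for a 1024x1024 image.
QUOTED_PRICES = {"low": 0.006, "medium": 0.053, "high": 0.211}


def image_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one call from its token counts."""
    total = input_tokens * INPUT_RATE_PER_M + output_tokens * OUTPUT_RATE_PER_M
    return total / 1_000_000


def implied_output_tokens(price_usd: float) -> float:
    """Output tokens a per-image price corresponds to.

    Assumption: input tokens are ignored, so this is only a rough estimate.
    """
    return price_usd * 1_000_000 / OUTPUT_RATE_PER_M


for tier, price in QUOTED_PRICES.items():
    print(f"{tier}: about {implied_output_tokens(price):,.0f} output tokens")
```

Read the implied token counts as a sanity check on the quoted figures, not as published numbers.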

Pollo AI's two-week test called it "absolutely worth it for professional creators and marketers who prioritize precision over artistic chaos." MindStudio said it "tends to win on commercial and product realism." Both right — for text-led ads. For everything else, the picture is messier.

That's the rest of this post.

How to use GPT Image 2 for ads

Three things GPT Image 2 does better than any other model for ad creation:

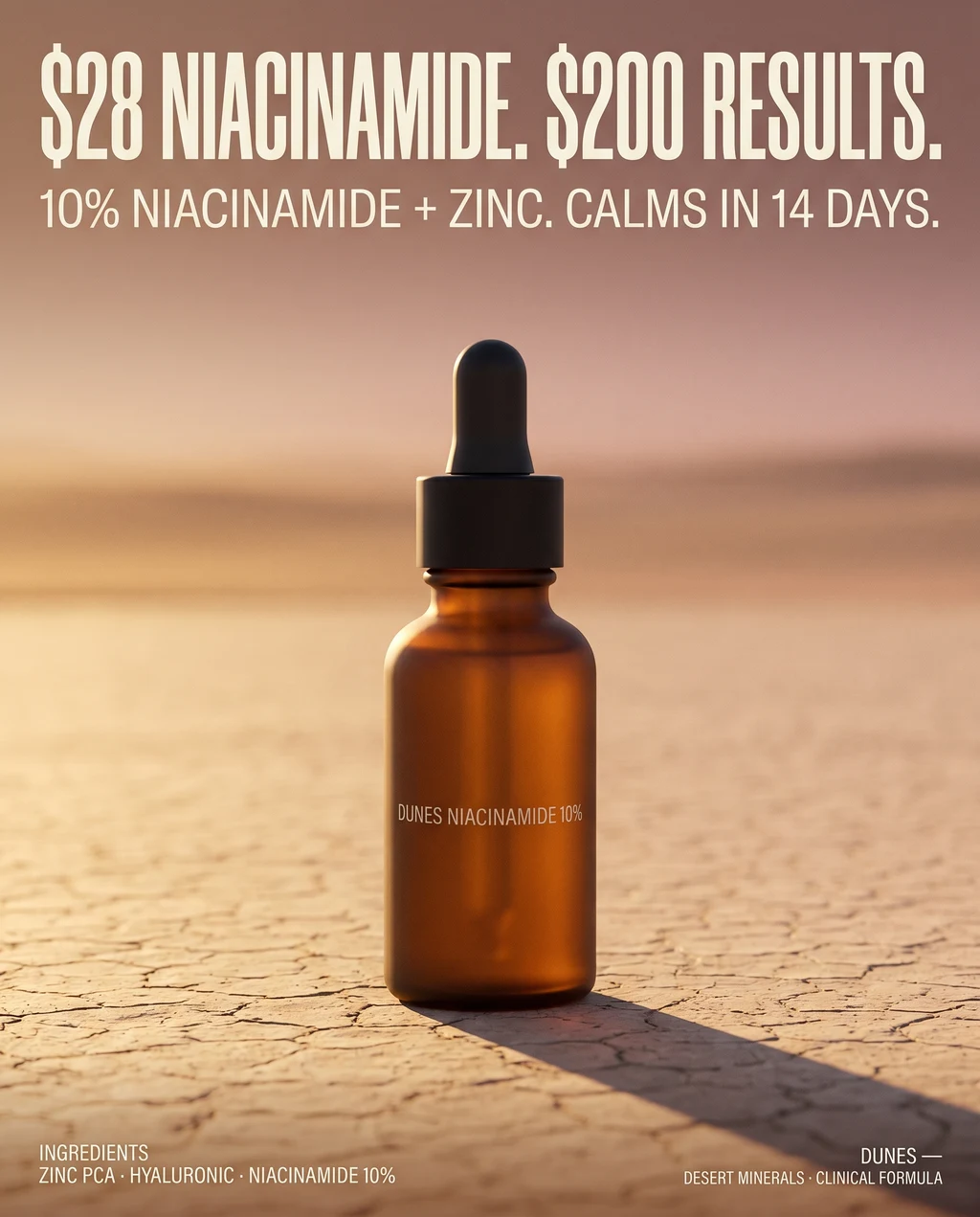

1. CTAs, price callouts, and headline typography. If you bought GPT Image 2 for one feature, this is it. "$28 niacinamide. $200 results." used to garble — letterforms collapse, the dollar sign drifts, the period vanishes. GPT Image 2 holds the typography. Multi-line bodies stay legible. Disclosures and small badge text survive at 2K. The text-rendering tail still exists — we cover the failure modes in the teardown section — but the floor is much higher than any model that came before it.

2. Multi-panel ad sets with one product across N backdrops. Set n: 4 to n: 8 under Thinking mode and ask for the same product in 8 different scenarios. Character and object continuity holds. The bottle's label stays the same bottle's label. Variant testing — same product, different lifestyle backdrops — collapses a day of prompt engineering into one call.

3. Compliance-heavy claims. Supplement labels, financial disclosures, "results may vary" footnotes. Anything where a single mis-rendered character is a regulatory problem. GPT Image 2 is the first model that renders small text reliably enough to ship.

A GPT Image 2 prompt structure for ads

Midjourney prompt syntax doesn't apply here. GPT Image 2 wants prose, not parameter strings. Give it:

- Subject + product anchor. What is the hero, and what does it look like in physical detail. "30ml frosted amber glass dropper bottle, matte black cap." Not "a beautiful skincare bottle."

- Composition and lighting. "Centered, golden-hour sunlight from the right, single sharp shadow." The reasoning step uses this to plan layout before drawing.

- Brand palette in prose. No HEX field — describe colors in language. "Cream off-white text on a warm ochre to dusty pink gradient." Reasonably accurate, not perfect.

- Typography directives, with exact text in quotes. "Bold condensed sans-serif headline reading '$28 NIACINAMIDE. $200 RESULTS.'" Quote the literal copy. The model treats it as a string, not as a vibe.

- Reasoning level. Use medium for production iteration. Use high only for hero shots you'll actually ship. Low is faster but breaks on spatial logic and photorealism.
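The structure above can be sketched as a small prompt builder. This is an illustration only: `AdBrief` and `build_prompt` are hypothetical names, and reasoning level is left out because the article describes it as a parameter rather than prompt text.

```python
from dataclasses import dataclass


@dataclass
class AdBrief:
    subject: str      # hero product in physical detail
    composition: str  # layout and lighting
    palette: str      # brand colors described in language, not HEX
    typeface: str     # typography directive
    headline: str     # literal copy, quoted verbatim in the prompt


def build_prompt(brief: AdBrief) -> str:
    """Join the brief into one prose prompt, quoting the headline exactly."""
    parts = [
        brief.subject,
        brief.composition,
        brief.palette,
        f'{brief.typeface} reading "{brief.headline}"',
    ]
    # Normalize each part to end with exactly one period.
    return " ".join(p.strip().rstrip(".") + "." for p in parts)


brief = AdBrief(
    subject="30ml frosted amber glass dropper bottle, matte black cap",
    composition="Centered, golden-hour sunlight from the right, single sharp shadow",
    palette="Cream off-white text on a warm ochre to dusty pink gradient",
    typeface="Bold condensed sans-serif headline",
    headline="$28 NIACINAMIDE. $200 RESULTS.",
)
print(build_prompt(brief))
```

Keeping the headline as its own field makes it hard to paraphrase the copy by accident when you iterate on the other parts.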

API parameters that matter for ads

Five parameters change your output:

| Parameter | What to set for ads |
|---|---|
| model | gpt-image-2 |
| quality | high for static feed ads, medium for variant testing, never low |
| size | 1024×1024 (Meta square), 1024×1792 (9:16 / Story), 1792×1024 (16:9 / YouTube) |
| n | 4–8 for variant testing under Thinking mode |
| output_format | webp for cheaper storage and platform-friendly compression |

The hidden cost is the resolution choice. 1024×1024 high quality is $0.211 per image. The 9:16 portrait sizes cost less per pixel but more per generation than gpt-image-1, while landscape sizes are cheaper. Run the math against your most-shipped aspect ratio first.
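As a rough sketch, the parameter table can be captured as a preset function that returns a plain request payload. The field names follow the table in this post and have not been checked against OpenAI's API reference; the `"1024x1024"` string format, the `ad_request` helper, and `n=1` for non-variant runs are assumptions. Nothing here calls the API.

```python
# Placement-to-size mapping taken from the table above.
SIZES = {
    "meta_square": "1024x1024",
    "story_9x16": "1024x1792",
    "youtube_16x9": "1792x1024",
}


def ad_request(prompt: str, placement: str, variant_test: bool = False) -> dict:
    """Build a request payload for one placement (hypothetical helper)."""
    if placement not in SIZES:
        raise ValueError(f"unknown placement: {placement!r}")
    return {
        "model": "gpt-image-2",
        "prompt": prompt,
        # high for static feed ads, medium for variant testing, never low
        "quality": "medium" if variant_test else "high",
        "size": SIZES[placement],
        # 4 to 8 for variant testing; a single image otherwise (assumption)
        "n": 4 if variant_test else 1,
        "output_format": "webp",
    }


print(ad_request("Dunes niacinamide serum ad", "story_9x16", variant_test=True))
```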

The 5-step ChatGPT Plus workflow (no API needed)

If you don't touch the API, here's the workflow inside ChatGPT that gets you the same outputs the API parameters above describe:

1. Open ChatGPT. Free tier or Plus. GPT Image 2 is available in both, with Thinking mode capped on free.

2. Paste your prompt as prose. Anchor: subject + product detail + composition + brand palette in language + exact text in quotes. Example structure is in the prompt at the bottom of this post.

3. Specify aspect ratio explicitly in the prompt. "Generate at 4:5 portrait, 1024×1280." ChatGPT honors this even though there's no dropdown.

4. Iterate via reply. Don't re-prompt from scratch. Reply with the change you want ("make the bottle 30% larger, lower into frame"). ChatGPT triggers partial regeneration automatically, the same workflow API users access via the edit endpoint.

5. Download in webp if available, png otherwise. ChatGPT exports at the native size GPT Image 2 generated; you'll resize for platform specs in your editor of choice.

The catch ChatGPT Plus doesn't tell you: each new aspect ratio is a fresh generation, not a free resize. Same compounding cost as the API path, just hidden inside the $20/mo subscription rate limits.

Worked example — same brief, two models, head to head

We built a fictional minimalist skincare brand called Dunes — desert-inspired, niacinamide serum, premium DTC positioning — and ran the exact same prompt through GPT Image 2 at high reasoning and Nano Banana 2 (Gemini 3.1 Flash Image) at HIGH thinking with web grounding. Same words. Same brief. One generation each. The full prompt is at the bottom of this post if you want to replicate the test.

GPT Image 2 (run via ChatGPT):

Headline rendered correctly. "NIACINAMIDE" — the long compound word that tripped earlier image models — held shape at the headline scale. Secondary line legible. The brand mark "DUNES NIACINAMIDE 10%" on the front of the bottle survived at the smaller scale. Composition reads as a graphic ad poster — typography-led, product as supporting element, sharp typographic hierarchy.

Nano Banana 2 (run via Gemini API at HIGH thinking, 4:5, 2K):

Same correctly spelled headline. Same legible subhead. Same legible bottle label. Composition reads as a product photograph with overlaid copy: bottle dominant in the lower frame, atmospheric haze in the background, shallow depth-of-field, headline anchored above. Generation time measured at the API: ~35 seconds.

What the comparison shows.

Both models nailed the text on the first prompt. The launch-day narrative, "GPT Image 2's text rendering is the moat," holds on the leaderboard, but for this specific brief both models produced correctly rendered headlines, subheads, and product labels. The 242-point Arena gap didn't matter once both outputs cleared the legibility bar.

Composition was the differentiator. GPT Image 2 read the brief as a graphic ad poster — typography first, product second. Nano Banana 2 read the same brief as a product photograph with overlaid copy — product first, typography anchored above. Both interpretations are defensible. Which one ships depends on the campaign: a sales-callout creative wants GPT Image 2's typographic hierarchy, a premium DTC product ad probably wants Nano Banana 2's photographic dominance.

The rest of the differences track the Reddit-flagged texture trade-off: Nano Banana 2's photographic depth-of-field and surface texture on the cracked clay held up better. GPT Image 2's flatter rendition was sharper on small typography but less cinematic.

This is the only ad-creation review on the SERP that ran the same prompt through both winners.

Methodology note: GPT Image 2 was generated through ChatGPT (subscription path, no per-image charge); Nano Banana 2 was generated through the Gemini API at 4:5, 2K, HIGH thinking (35 seconds measured). API list pricing for both models is in the cost-comparison table further down — those are list prices for 1024×1024 standard configurations, not per-call costs for our specific generations.

Where it breaks for ad creation

The benchmark sweep is real. Shipping an ad by Tuesday is harder than the leaderboard suggests.

Speed at high reasoning is the visible cost. A widely-shared Reddit thread flagged the upper bound: "the self-review loop is interesting but 11 minutes per image is rough for any real workflow." That's the high-effort tail when the model is researching, planning, and self-checking. Medium reasoning is meaningfully faster, but if you're testing 30 variants in a day, the difference compounds.
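To see how that compounds, here is a back-of-envelope calculation for a 30-variant day. It assumes generations run one after another and uses the two timings cited in this post: the Reddit-reported 11-minute upper bound and our single 35-second Nano Banana 2 measurement. Neither is a benchmark average.

```python
def batch_minutes(variants: int, seconds_per_image: float) -> float:
    """Total wall-clock minutes if every variant is generated sequentially."""
    return variants * seconds_per_image / 60


VARIANTS = 30

# Anecdotal upper bound for GPT Image 2 at high reasoning: 11 minutes per image.
print(batch_minutes(VARIANTS, 11 * 60))  # 330.0 minutes, i.e. 5.5 hours

# Single measured Nano Banana 2 generation: 35 seconds.
print(batch_minutes(VARIANTS, 35))  # 17.5 minutes
```

Parallel requests would shrink the wall-clock time, but the per-image wait still sets the pace of each review-and-revise loop.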

Stricter copyright guardrails than gpt-image-1. Brand logos, character likenesses, anything that looks like another company's IP get refused more often. Useful for compliance. Painful when you're trying to prototype a partnership ad or test a parody concept.

Nature, organic textures, and deep depth-of-field still favor Nano Banana 2. A popular r/OpenAI thread made the case directly: "GPT Image 2 is amazing for a lot of things, but for nature it is not." For lifestyle ads where the product sits in a forest, on a beach, or in a soft-focus garden, Nano Banana 2 holds the texture better. We covered the same trade-off pattern in our comparison of static vs video ads.

Knowledge cutoff is December 2025. Web search only kicks in at thinking mode. If your ad references current product packaging, the latest cultural moment, or anything that shipped after the cutoff, you have to either turn on thinking (more latency) or describe the reference in detail yourself.

When to use GPT Image 2 vs other models

The honest framework, by use case:

| You're making | Best model | Why |
|---|---|---|
| Text-heavy mockup with CTAs and price callouts | GPT Image 2 at medium reasoning | Leads Image Arena's text-to-image category by 242 points. Multi-line typography holds at 2K. |
| Multi-panel set with one product, one prompt | GPT Image 2 Thinking mode (n: 8) | OpenAI documents up to 8 connected images per prompt with character continuity. |
| Lifestyle ads with deep depth-of-field, organic texture | Nano Banana 2 | The Reddit-flagged texture trade-off lined up in our worked example: Nano Banana 2 produced the more cinematic photographic composition on the same prompt. |

The decision tree most marketers default to — "what's the highest-rated model on the leaderboard?" — is the wrong one for ads. The leaderboard is rated on aesthetic preference. Ads are rated on whether the CTA is legible, the product is on-brand, and the file is shipped in the dimensions your ad platform accepts.

A finished ad campaign is rarely one image. It's the same concept rendered across square, vertical, horizontal, story, banner, and Pinterest pin. Every raw model — including GPT Image 2 — makes you re-prompt for each ratio. One concept × every platform = a stack of separate generations.

That's the workflow gap. It's not a model problem. It's a workflow one.
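A quick sketch of how the generation count stacks up, using the six placements listed above. The flat per-image price is an assumption for illustration (the $0.211 high-quality 1024×1024 figure); real prices vary by size, and `generation_plan` and `plan_cost` are hypothetical helper names.

```python
from itertools import product

PLACEMENTS = ["square", "vertical", "horizontal", "story", "banner", "pinterest_pin"]


def generation_plan(concepts, placements=PLACEMENTS):
    """Every (concept, placement) pair is a separate generation."""
    return list(product(concepts, placements))


def plan_cost(plan, price_per_image: float) -> float:
    """Total USD for the plan at a flat per-image price (simplifying assumption)."""
    return round(len(plan) * price_per_image, 2)


one_concept = generation_plan(["dunes-hero"])
ten_concepts = generation_plan([f"concept-{i}" for i in range(10)])

print(len(one_concept), plan_cost(one_concept, 0.211))
print(len(ten_concepts), plan_cost(ten_concepts, 0.211))
```

The dollar totals stay small. What grows is the number of separate prompts, waits, and reviews.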

For evaluating raw image generators on their own merits, our seven AI image models compared for ad creation walks through each on ad-specific criteria.

When not to use GPT Image 2 (or any AI model) for an ad

Honest beat. If you're shipping a campaign where:

- The product packaging or label needs to be pixel-accurate to your real SKU (regulated supplements, food with FDA disclosures, hardware with exact specs)

- The brand color match has to be HEX-precise for an enterprise style-guide audit

- You're targeting a highly visual category (luxury fashion, fine jewelry, premium watches) where photography quality is the brand

— hire a photographer or a designer. AI image models are a tool, not a replacement. They're best for testing volume and proving demand on creative before committing the production budget.

What does GPT Image 2 cost compared to other models?

Per-image, at the quality tier you'd actually ship:

What the per-image price hides:

- Resize-via-regenerate cost. Both GPT Image 2 and Nano Banana 2 charge per generation, per dimension. Midjourney's monthly subscription absorbs this. Factor your aspect-ratio mix when comparing.

- First-prompt success rate. In the worked example above, both models rendered the text correctly on the first try. For text-led ads, the cost per usable ad matters more than the cost per attempt.
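One way to put a number on cost per usable ad is to divide the per-attempt price by the share of attempts you would actually ship. The success rates below are hypothetical placeholders, not measured results; only the $0.211 price comes from this post.

```python
def cost_per_usable_ad(price_per_attempt: float, success_rate: float) -> float:
    """Expected spend per shippable image, given the fraction of attempts that pass."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return price_per_attempt / success_rate


# Hypothetical success rates, for illustration only.
print(cost_per_usable_ad(0.211, 1.0))  # every attempt ships
print(cost_per_usable_ad(0.211, 0.5))  # half the attempts ship
```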

For shops shipping AI-generated ads at volume — testing what wins, iterating fast, throwing away what doesn't — the per-image price is rarely the bottleneck. The compounding cost is the time between "I have an idea" and "I have a file the platform accepts." That's the gap our breakdown of Instagram static product ad examples and our ad creative testing guide both circle back to.

FAQ

Is GPT Image 2 better than Nano Banana 2 for ads?

For ads with rendered text — CTAs, prices, disclosures, multi-line headlines — yes. GPT Image 2 leads on Image Arena's text-to-image category by 242 points and ships ~99% Latin script accuracy. For lifestyle ads with organic textures, soft depth-of-field, and natural environments, Nano Banana 2 still wins on texture. For workflow speed and HEX color discipline, Nano Banana 2 has the edge. Pick by use case, not by leaderboard.

How much does GPT Image 2 cost per ad?

OpenAI publishes per-token pricing: $8 per million image input tokens, $30 per million image output tokens. The OpenAI pricing calculator translates that to roughly $0.006 (low quality), $0.053 (medium), and $0.211 (high) per 1024×1024 image, as documented in third-party guides. Actual cost moves with size, edit operations, and prompt length, so treat per-image figures as estimates rather than fixed list prices.

Can GPT Image 2 use my actual product photo?

Not as a structured input. You can upload an image and ask for edits — GPT Image 2 will modify regions of the uploaded image with partial regeneration. What you cannot do is say "use this exact bottle from this photo and place it in a new scene." For real-product accuracy across new scenes, Flux Kontext and Nano Banana Pro's multimodal ingest are still ahead. GPT Image 2 rebuilds the product from your prose description every time.

Can ChatGPT generate ads for free?

Yes, partially. GPT Image 2 rolled out to free-tier ChatGPT on April 21, 2026 with reasoning capped — fine for one mockup, slow for variant testing. Thinking mode, multi-image generation, and web search are gated to Plus, Pro, and Business subscribers.

How long does it take to generate a finished ad with GPT Image 2?

Generation time scales with the reasoning level. At high reasoning a Reddit user reported the upper bound at 11 minutes per image when self-review iterates aggressively. For Nano Banana 2 we measured a single 4:5 generation at HIGH thinking + 2K coming back in 35 seconds via the Gemini API. Multiply either by every dimension your campaign needs.

The benchmark winner is real. The workflow is the rest.

GPT Image 2 is the most accurate, most reasoning-capable image model anyone has shipped to a public API. For text-heavy ad mockups, multi-panel variant tests, and compliance-heavy creative, it's the tool worth opening tonight.

For shipping ten finished ads in five aspect ratios by Tuesday morning, that's a different problem. The model is one layer of the stack. The workflow — reference + product + brand + every dimension your platform needs — is the other one. Pick the layer that's bottlenecking you, not the one loudest in the news cycle.

If the model is your bottleneck, our comparison of seven AI image models for ad creation is where to start. If the workflow is, clone an ad with AdDogs, pick a reference from the library, and ship the finished version in seconds. Start with five free credits. No card.