Nano Banana 2 for ads: an honest production test

On February 26, 2026, Google quietly swapped the default image model inside Google Ads. Anyone running Performance Max creative that day got new outputs — different lighting, different composition logic, different small-text rendering — without changing a single setting. The release post landed on the blog three hours later. By then, every Asset Studio user was already shipping ads on the new model.

The new default is Nano Banana 2 — formal name Gemini 3.1 Flash Image Preview, model ID gemini-3.1-flash-image-preview. Source: Google's launch announcement.

We noticed because we run on the same model. Every ad generated on AdDogs flows through Nano Banana 2 via the Vertex AI REST API. At 2K resolution it bills $0.101 per generation per the official Gemini API pricing page — a line item we pay every time someone clicks "generate."

This post is what we found in production: which capabilities are real, which are quietly broken, and which the consumer-app version doesn't expose. Most reviews describe the model. We describe the operating cost.

Three models named Nano Banana

Nano Banana 2 is Google's Flash-tier image generation model. It runs on Vertex AI and the Gemini API and powers image generation in the Gemini consumer app.

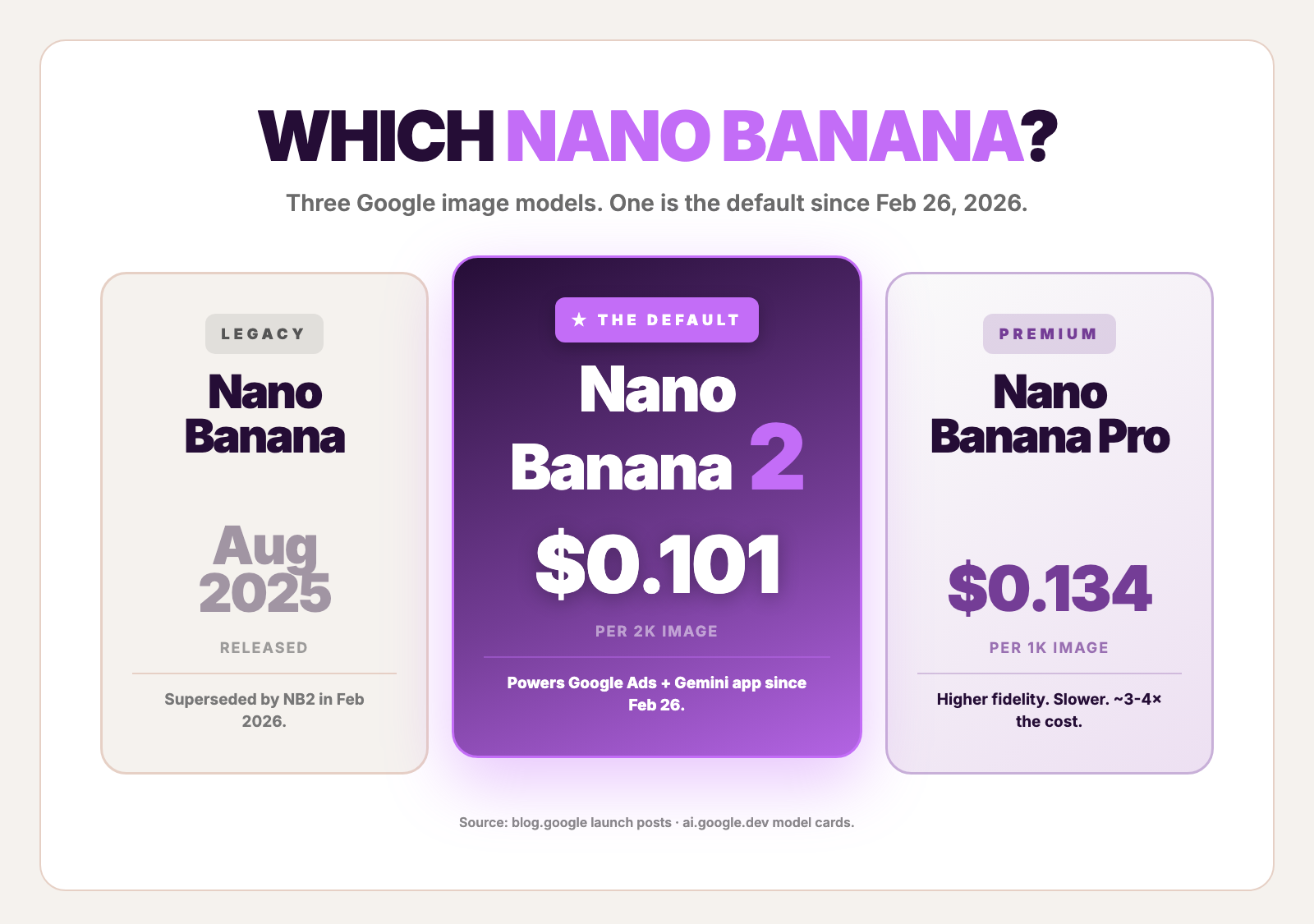

Naming gets confusing fast. Google ships three image models under "Nano Banana" branding. They are not the same:

| Model | Real name | API ID | Released | Tier | Status as of May 2026 |
|---|---|---|---|---|---|
| Nano Banana (original) | Gemini 2.5 Flash Image | gemini-2.5-flash-image | Aug 26, 2025 | Flash | Legacy. Superseded. |
| Nano Banana Pro | Gemini 3 Pro Image | gemini-3-pro-image-preview | Nov 20, 2025 | Pro | Live. Higher fidelity. Roughly 2× the cost at 1K. |
| Nano Banana 2 | Gemini 3.1 Flash Image Preview | gemini-3.1-flash-image-preview | Feb 26, 2026 | Flash | The default in Google Ads. The Gemini consumer app's image engine. The one most "Nano Banana" articles are quietly about. |

The shortest disambiguation: if it's free in the Gemini app, it's running on Nano Banana 2. If it costs $0.134 per 1K image on the API, it's Nano Banana Pro. If you're hearing about a model that "changed Google Ads," the change was the swap from Nano Banana Pro to Nano Banana 2 on February 26.

Sources for the table: the Nano Banana Pro launch post, the Feb 2026 Nano Banana 2 launch, and the official model documentation.

This post is about the Flash one — the one running underneath every ad generated on Google's surfaces since February 26.

What changed when Nano Banana 2 became the default

The swap from Nano Banana Pro to Nano Banana 2 inside Google Ads wasn't a downgrade — Pro is still the higher-fidelity ceiling. It was a deliberate cost-and-speed tradeoff baked into a new model that closed most of the quality gap at half the price.

Concrete deltas, drawn from the official model docs:

Native 4K, up from 1K on the original Nano Banana. Standard tier supports 1K out of the box. 2K and 4K are paid resolutions. 4K at native generation lands around 16 megapixels — billboard-grade output from a Flash-tier model.

14 reference images per request. Ten objects, four characters, all composed in one call. Nano Banana Pro accepts six and five; the Flash tier ships the bigger object slot. For ads — where a typical composition is one hero product, one brand mark, one or two contextual references — the new default fits the brief in a single API call rather than chaining three.

14 native aspect ratios. 1:1, 3:2, 2:3, 3:4, 4:3, 4:5, 5:4, 9:16, 16:9, 21:9, plus four ultra-wides at 1:4, 4:1, 1:8, 8:1. The same 14 ratios AdDogs supports natively on Pro and Ultimate plans. The overlap isn't accidental: both Google and we calibrated to actual ad surfaces, not arbitrary numbers.

Thinking mode, on by default. Three levels — minimal, standard, high — exposed via thinkingConfig.thinkingLevel. The model reasons about layout before drawing. Cannot be fully disabled. The previous-generation Flash had no reasoning step at all.

Grounding tools, native. googleSearch with webSearch and imageSearch baked into the API. With grounding enabled, the model researches current product packaging, real-world references, and visual trends before generation. This is the largest single capability the Gemini consumer app does not expose to users — it's API-only.

Batch tier, ~50% off. Underused. The batch endpoint accepts the same prompts and returns within a 24-hour window at half the standard list price. For shops generating overnight test runs across a creative library, batch cuts the API bill nearly in half with no quality difference.
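
To make the aspect-ratio point concrete, here is a minimal Python sketch that maps a platform's pixel dimensions onto the closest of the 14 native ratios listed above. The function name and the log-scale distance are our own illustrative choices, and the pixel sizes in the usage note are common examples rather than a platform spec.

```python
import math

# The 14 native ratios listed in this post, written as "W:H".
NATIVE_RATIOS = [
    "1:1", "3:2", "2:3", "3:4", "4:3", "4:5", "5:4",
    "9:16", "16:9", "21:9", "1:4", "4:1", "1:8", "8:1",
]


def nearest_native_ratio(width: int, height: int) -> str:
    """Return the native ratio closest to width/height.

    Distance is measured on a log scale so that 2:1 and 1:2 are
    treated as equally far from 1:1 (an illustrative choice).
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    target = math.log(width / height)

    def distance(ratio: str) -> float:
        w, h = ratio.split(":")
        return abs(math.log(int(w) / int(h)) - target)

    return min(NATIVE_RATIOS, key=distance)
```

For example, `nearest_native_ratio(1080, 1350)` resolves to `"4:5"` and `nearest_native_ratio(1080, 1920)` to `"9:16"`, so a placement list can be turned into config values without hand-mapping each size.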

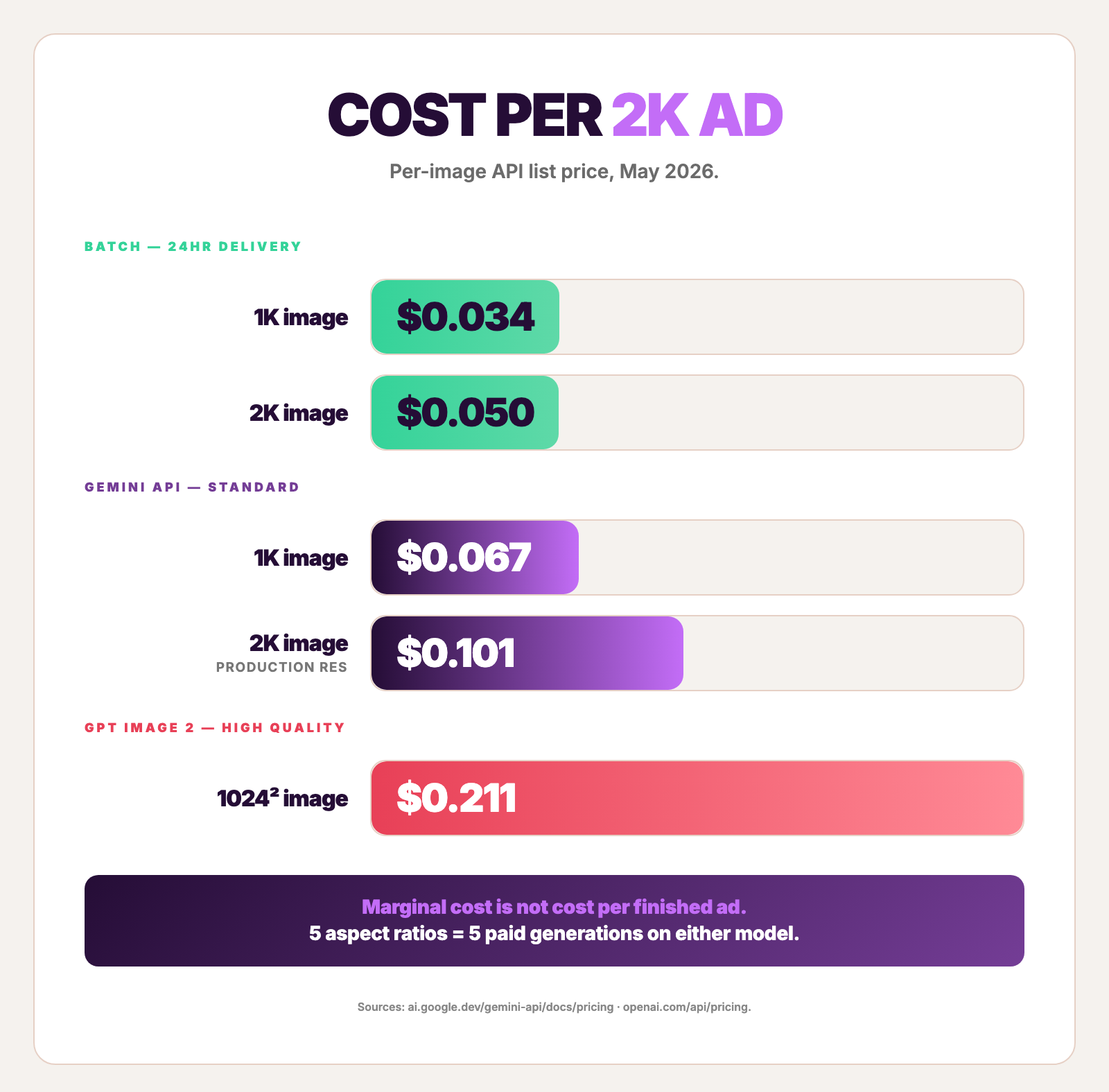

Pricing, verified May 2026 against the Gemini API pricing page:

| Resolution | Standard $/img | Batch $/img |
|---|---|---|
| 0.5K (512px) | $0.045 | $0.022 |
| 1K (default) | $0.067 | $0.034 |
| 2K | $0.101 | $0.050 |
| 4K | $0.151 | $0.076 |

Vertex AI matches. The Gemini consumer app is free with rate limits — fine for one mockup, useless for production volume.

The trick almost no review describes

The model's distinctive capability for ads isn't text rendering or thinking mode. It's the multi-image composition. Pass a product photo, a reference ad, and a logo as separate inputs in a single API call, and the model composes them. No edit chain. No second-pass inpainting. One call, one finished output.

Almost every review that compares Nano Banana 2 with GPT Image 2 misses this because most writers feed the model one prompt and one optional reference. Production ad pipelines, including ours, feed it three or four image inputs per call as the standard pattern.

The relevant chunk of an actual API request body looks like this:
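
A minimal Python sketch of such a body, built from the field paths this post names (`thinkingConfig.thinkingLevel`, `tools[0].googleSearch`, `generationConfig.imageConfig.aspectRatio` and `imageSize`). The helper names, the placement of `thinkingConfig` under `generationConfig`, the `searchTypes` wrapper around `webSearch` and `imageSearch`, and the `responseModalities` value are assumptions to check against the current API reference. It is an illustration, not a copy of our production payload.

```python
import base64
import json


def image_part(image_bytes: bytes, mime_type: str = "image/png") -> dict:
    """Wrap raw image bytes as a base64 inline-data part."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {"inlineData": {"mimeType": mime_type, "data": encoded}}


def build_request_body(
    prompt: str,
    product: bytes,
    reference_ad: bytes,
    logo: bytes,
    aspect_ratio: str = "4:5",
    image_size: str = "2K",
    thinking_level: str = "HIGH",
) -> dict:
    """Assemble one multi-image composition request (hypothetical helper)."""
    return {
        "contents": [{
            "role": "user",
            # One text part plus three separate image inputs in a single call.
            "parts": [
                {"text": prompt},
                image_part(product),
                image_part(reference_ad),
                image_part(logo),
            ],
        }],
        # Grounding. The nesting under googleSearch is an assumption.
        "tools": [{"googleSearch": {
            "searchTypes": {"webSearch": {}, "imageSearch": {}},
        }}],
        "generationConfig": {
            "responseModalities": ["IMAGE"],  # assumed value
            "thinkingConfig": {"thinkingLevel": thinking_level},
            "imageConfig": {
                "aspectRatio": aspect_ratio,
                "imageSize": image_size,
            },
        },
    }
```

Calling `json.dumps(build_request_body(prompt, product_bytes, reference_bytes, logo_bytes))` yields the JSON string to send as the request body.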

Three parts of that request body shape the output more than the prompt text does:

thinkingConfig.thinkingLevel: "HIGH" triggers the reasoning step before generation. We run HIGH for compositions where layout matters. We drop to standard for batch runs where predictability beats fidelity. Minimal exists; we never use it for ads.

tools[0].googleSearch enables grounding. With both webSearch and imageSearch declared, the model fetches real visual references before composing. The output looks current rather than generic. For ad creative — which has to feel like 2026, not 2023 — this is the difference between something we ship and something we throw away.

generationConfig.imageConfig.aspectRatio and imageSize together pick from the 14 native ratios at the resolution we want. No prompt-level ratio specification needed. The model honors the config field cleanly.

The Gemini consumer app exposes none of these three. Free-tier output corresponds roughly to thinking-standard with no grounding and a default aspect ratio. For brand-grade or hero output, the API path is the only route.

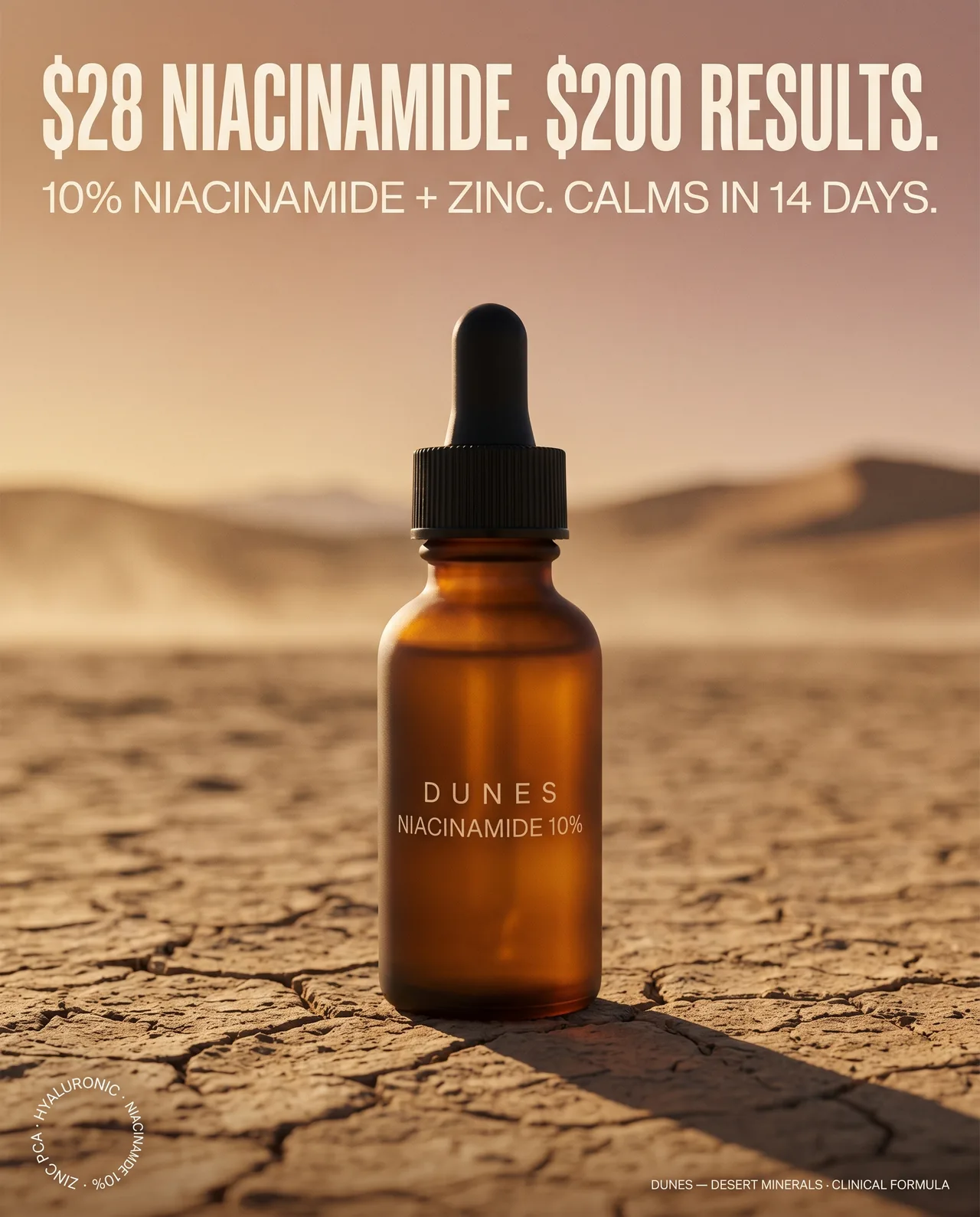

Worked-example prompt for the prose-and-typography side — same DUNES skincare brief used in our GPT Image 2 head-to-head test, runnable as-is on the Gemini API or by pasting into the Gemini app:

A single 4:5 + HIGH thinking + 2K generation comes back in roughly 35 seconds via the Gemini API — measured in our GPT Image 2 head-to-head test. The model treats quoted strings as literal text to render and the HEX codes as color anchors for the gradient. Both headlines held on first prompt in this run; bottom-right brand mark and bottom-left ingredients badge both rendered legibly. The composition reads as a product photograph with overlaid copy — exactly the photographic-led tendency the brief flagged.

Six things we noticed running Nano Banana 2 in production

A field report from production runs since February 26, 2026. Some of these are real strengths. Some are real failures. We code for both.

1. Multi-image composition collapsed our edit chain. Before NB2, our pipeline ran a generation pass plus an inpainting pass to land a logo cleanly on a generated scene. NB2 takes the logo as a third image input and composes it in the same call. One generation per finished ad, not two.

2. The 16-IP hard block, December 2025 onward. After a Disney cease-and-desist concerning the consumer Gemini app, 16 major intellectual properties were hard-blocked: Disney characters, Marvel, and several others. The error reads "The image was filtered because it may contain copyrighted characters or content." The block extends past the named IPs: anything that resembles them, even loosely, gets refused. For ad shops cloning ads with recognizable character likenesses or logos, it's a hard ceiling.

3. Trademark refusals come through safetyRatings, not as errors. Pasting a third-party brand logo into a composition triggers a block. The Vertex API doesn't surface a friendly error. It returns an empty image array, and the policy reason is buried in the safetyRatings field, which production code has to inspect explicitly. We log every hit. The Medium "Nano Banana Content Blocked" thread catalogs the same pattern.

4. Iterative-edit degradation, the April 2026 community signal. After three or four conversational edits to the same image, the quality loss compounds: faces age visibly, skin textures plasticize, colors drift. The signal reached community fever pitch in April 2026, with hundreds of forum posts on the Google AI Developers community and Reddit. The workaround: don't chain four edits. Generate fresh after the second.

5. Safety-filter false positives on apparel. HARM_CATEGORY_SEXUALLY_EXPLICIT triggers on legitimate fashion and apparel photography surprisingly often. The error: "The image was filtered out because it violated Google's Generative AI Prohibited Use policy." DTC clothing brands hit this most. We pre-screen prompts that include silhouette-related words.

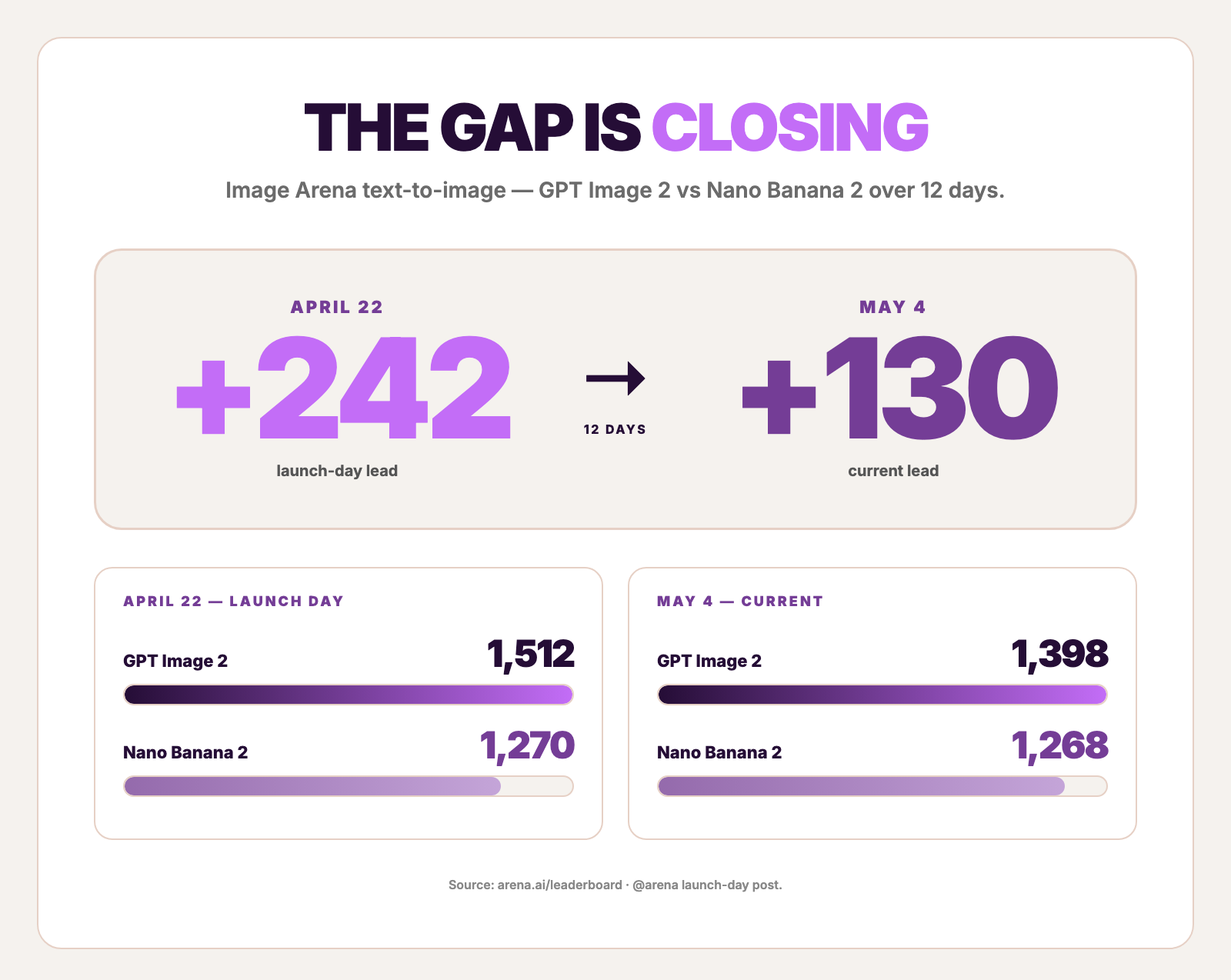

6. Small body text on photographic backgrounds is the most common reason to re-generate. Headlines and CTAs at 16 points and above land cleanly. Sub-12-point body copy on a busy background (disclosures, ingredient lists, tiny brand marks) drops legibility. This is also where the Image Arena leaderboard gap shows up: the model sits at roughly 1,268 on text-to-image vs GPT Image 2 medium at 1,398 as of May 4, 2026. The score gap is consistent with the rendering gap we see on small text, though it does not measure it directly.
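
The workaround in item 4 is easier to keep if code enforces it rather than habit. A minimal sketch, assuming a per-image session object; the class and method names are hypothetical, and the cap of two comes from the "generate fresh after the second" rule above.

```python
from dataclasses import dataclass

# "Generate fresh after the second" edit, per item 4.
MAX_EDITS_PER_IMAGE = 2


@dataclass
class EditSession:
    """Counts conversational edits applied to the current image."""

    edits_applied: int = 0

    def next_action(self) -> str:
        """Return "edit" while under the cap, otherwise "regenerate"."""
        if self.edits_applied < MAX_EDITS_PER_IMAGE:
            return "edit"
        return "regenerate"

    def record_edit(self) -> None:
        """Call after each conversational edit is applied."""
        self.edits_applied += 1

    def record_regeneration(self) -> None:
        """Call after a fresh generation; the edit budget resets."""
        self.edits_applied = 0
```

The caller checks `next_action()` before each change request and, on `"regenerate"`, folds the accumulated instructions into a fresh prompt instead of editing the drifting image again.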

Two more failure modes that hit at production volume:

Silent refusals look like silence. Any production system has to inspect safetyRatings on every response. The default Vertex behavior on a content-policy refusal is to return without the image — no exception, no error code at the HTTP layer. Reddit r/Bard runs dozens of "why did the model just not respond?" threads that are exactly this.

Preview-tier rate limits are real. The model is in Preview status. Google's docs explicitly warn of "more restrictive rate limits." Tier 2 quota upgrades are the path for production volume — surfaced in community posts on the Google AI Developers forum as the standard production setup.
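
For the silent-refusal case, the check can be a small function that runs on every parsed response. This is a sketch that assumes the response JSON carries `candidates[].content.parts[].inlineData`, `candidates[].safetyRatings[]` with `category` and `blocked`, `candidates[].finishReason`, and `promptFeedback.blockReason`. Confirm those names against the responses you actually receive; the function name and the returned summary keys are our own.

```python
from typing import Any


def inspect_response(response: dict[str, Any]) -> dict[str, Any]:
    """Summarize whether a response carries an image and, if not, why.

    Field names follow the assumed response shape described above.
    """
    images = []
    blocked_categories = []
    finish_reasons = []
    for candidate in response.get("candidates") or []:
        finish_reasons.append(candidate.get("finishReason"))
        content = candidate.get("content") or {}
        for part in content.get("parts") or []:
            if "inlineData" in part:
                images.append(part["inlineData"])
        for rating in candidate.get("safetyRatings") or []:
            if rating.get("blocked"):
                blocked_categories.append(rating.get("category"))
    prompt_feedback = response.get("promptFeedback") or {}
    return {
        "has_image": bool(images),
        "image_count": len(images),
        "blocked_categories": blocked_categories,
        "finish_reasons": finish_reasons,
        "prompt_block_reason": prompt_feedback.get("blockReason"),
    }
```

A pipeline would log the summary whenever `has_image` is false, so a policy refusal shows up as a recorded event with a category instead of an empty result.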

What the leaderboard says, and why it's incomplete

Image Arena puts GPT Image 2 ahead on text-to-image: 1,398 vs 1,268 as of May 4, 2026, per arena.ai/leaderboard. On GPT Image 2's launch day, April 22, the gap was +242 (1,512 vs 1,270, the largest gap recorded that day, per @arena). The gap compressed by roughly 110 points over the following two weeks as votes accumulated past launch hype.
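
To put those gaps in head-to-head terms, the sketch below converts a rating difference into an expected preference rate. It assumes Arena scores behave like standard Elo ratings on the usual 400-point logistic scale; that scaling is our assumption, not something the leaderboard figures above state.

```python
def expected_preference(rating_gap: float) -> float:
    """Expected share of pairwise votes won by the higher-rated model.

    Uses the standard Elo logistic curve with a 400-point scale
    (an assumption about how the Arena scores are calibrated).
    """
    return 1.0 / (1.0 + 10 ** (-rating_gap / 400))


launch_gap = 1512 - 1270  # 242 points on April 22
may_gap = 1398 - 1268     # 130 points on May 4

print(f"launch day: {expected_preference(launch_gap):.0%}")
print(f"May 4:      {expected_preference(may_gap):.0%}")
```

Under that assumption, a 242-point gap corresponds to the higher-rated model being preferred in about 80% of pairings, and a 130-point gap to about 68%.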

The score gap is real. It does not translate to a quality gap on every ad brief. Three reasons it predicts less than reviews assume:

The Arena rating is preference-weighted across all image generation tasks. Most votes are aesthetic — landscapes, characters, conceptual art. The text-to-image vertical is weighted, but the input pool is broader than ad creative.

Both models clear the legibility bar on headlines and CTAs at 16 points and above. The gap shows up on smaller body copy, where the lower-scoring model produces more re-generation cycles. For a hero-line-only ad creative, the gap is invisible.

Multi-image composition is not measured by Image Arena. Pass a product photo, a reference ad, and a logo to both models and ask each to compose them in one call. The Flash model does it natively in 14-image mode. GPT Image 2 requires an edit chain. The leaderboard score doesn't surface this difference at all.

The benchmark winner is the right answer for some briefs and the wrong answer for others. The cost math sometimes flips the decision. The next section runs that math.

Nano Banana 2 vs GPT Image 2, by spec

| Metric | Nano Banana 2 | GPT Image 2 |
|---|---|---|
| Image Arena text-to-image, Apr 22, 2026 | 1,270 | 1,512 (+242) |
| Image Arena text-to-image, May 4, 2026 | 1,268 | 1,398 (+130) |
| Native resolution | 0.5K, 1K, 2K, 4K | 1024², 2K beta, 4K beta |
| Aspect ratios | 14 native (incl. 1:4, 4:1, 1:8, 8:1) | 3 native; others via re-prompt |
| Thinking mode | HIGH / standard / minimal, on by default | high / medium / low / off, off by default |
| Grounding (Google Search) | Yes, native | No |
| Multi-image input | 14 references in one call | 8 connected images, edit-only |
| Pricing per 1024² (high quality) | $0.067 (1K) / $0.101 (2K) | ~$0.211 |
| Batch pricing | Yes, ~50% off | No |
| Best for | Multi-image composition, high volume, native 4K | Text-led ads, multi-panel sets with character continuity |

Sources: arena.ai/leaderboard, Gemini API pricing, OpenAI pricing. NB2 wins on cost, batch, native multi-image composition, and aspect-ratio variety. GPT Image 2 wins on text-rendering benchmark scores and connected-image continuity. The decision is workflow-dependent. For the head-to-head worked example on the same DUNES skincare brief, see our GPT Image 2 for ads test — both models nailed the headline; composition preference differed.

What it costs at 30, 100, and 1,000 ads

Per-image API cost is the easy part. Cost per finished ad — across every aspect ratio you ship — is where the math gets honest:

| Setup | Per finished 2K-grade ad | 30 ads/mo | 100 ads/mo | 1,000 ads/mo |
|---|---|---|---|---|
| Gemini consumer app (free, manual workflow) | $0 marginal | ~15-30 hrs of labor | ~50-100 hrs | not viable |
| Gemini API (2K, standard) | $0.101 + your time | $3.03 + 15-25 min/ad | $10.10 + 25-40 hrs | $101 + 250-400 hrs |
| Gemini API (2K, batch, 24-hr delivery) | $0.050 | $1.50 | $5.00 | $50 + ~250 hrs |
| Vertex AI (2K, standard, with SLA) | $0.101 | matches API | matches API | matches API |
| GPT Image 2 (high, 1024²) | ~$0.211 | $6.33 + 15-25 min/ad | $21.10 + 25-40 hrs | $211 + 250-400 hrs |
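
The dollar figures in the table are plain multiplication, which makes them easy to re-run when prices or volumes change. A small sketch using the per-image prices quoted in this post; the labor estimates are left out, and the dictionary keys are just labels.

```python
# Per-image list prices quoted in this post (USD).
PRICE_PER_IMAGE = {
    "Gemini API (2K, standard)": 0.101,
    "Gemini API (2K, batch)": 0.050,
    "GPT Image 2 (high, 1024x1024)": 0.211,
}
VOLUMES = (30, 100, 1000)


def monthly_api_cost(price_per_image: float, ads_per_month: int) -> float:
    """API spend for one generation per ad, rounded to cents."""
    return round(price_per_image * ads_per_month, 2)


for setup, price in PRICE_PER_IMAGE.items():
    cells = ", ".join(
        f"{n} ads: ${monthly_api_cost(price, n):,.2f}" for n in VOLUMES
    )
    print(f"{setup}: {cells}")
```

This covers one generation per ad only. Extra aspect ratios and re-generations multiply the result.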

Two costs the per-image price hides:

Resize-via-regenerate. Both Gemini and OpenAI charge per generation, per dimension. Generating a Meta square plus Instagram 4:5 plus 9:16 Story plus 16:9 YouTube plus a Pinterest pin from one concept is five paid generations on either model. Our breakdown of ad sizes and specs for every platform lists the dimensions a complete campaign needs. Subscription-only paths absorb this only inside their own rate limits. Factor your aspect-ratio mix when comparing.

First-prompt success rate. Marginal cost of an attempt is not cost per usable ad. For text-led ads where small body copy matters, the higher re-generation rate vs GPT Image 2 narrows the gap. For multi-image-composition ads where the Flash model needs one call vs GPT Image 2's edit chain, the gap widens in the Flash model's favor.
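
Both hidden costs fold into one small formula: price per generation, times the number of aspect ratios, divided by the first-prompt success rate. The sketch below assumes every attempt succeeds independently with the same probability, so the expected number of attempts is 1 divided by that rate. The function name is ours, and any success rate you plug in is your own measurement, not a figure from this post.

```python
def cost_per_usable_ad(
    price_per_generation: float,
    aspect_ratios: int,
    first_prompt_success_rate: float,
) -> float:
    """Expected API spend for one concept delivered in every ratio.

    Assumes independent attempts with a constant success probability,
    so expected attempts per usable image = 1 / success rate.
    """
    if aspect_ratios < 1:
        raise ValueError("aspect_ratios must be at least 1")
    if not 0 < first_prompt_success_rate <= 1:
        raise ValueError("success rate must be in (0, 1]")
    expected_attempts = 1 / first_prompt_success_rate
    return price_per_generation * aspect_ratios * expected_attempts
```

With five ratios at $0.101 and a perfect first-prompt rate, the result is $0.505 per concept. Halving the success rate doubles it, which is why the re-generation rate matters as much as the list price.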

For shops shipping at volume — testing what wins, iterating fast, throwing away what doesn't — the per-image fee is rarely the bottleneck. The compounding cost is the time between concept and a finished ad in every dimension the platform accepts. That's the workflow gap our breakdown of Instagram static product ad examples and ad creative testing guide both circle. Our AdDogs vs AdCreative.ai pricing comparison covers the workflow-cost gap in depth. AdDogs runs Nano Banana 2 underneath the workflow at the $0.101-per-generation floor, while the user-facing cost stays at $0.33 per finished ad in any of the 14 dimensions.

The legal corner most ad shops miss

Per Google's Vertex AI generative AI terms and the Gemini API additional terms, the user owns the output and can use it commercially. Google Cloud also ships a generative AI indemnification clause on paid Vertex AI services. So far so standard.

Two caveats most ad shops never read:

Trademark claims are explicitly excluded from indemnification. Allegations "based on a trademark-related right as a result of Customer's use of Generated Output in trade or commerce" are not covered by the indemnity. Cloning a known brand's logo or wordmark into your ad does not carry indemnification. The trademark side of ad-cloning legality more broadly is the topic of our is it legal to clone a competitor's ad post.

Indemnification covers GA services. As of May 2026, Nano Banana 2 (gemini-3.1-flash-image-preview) is in Preview status, not generally available. Verify the model is on the indemnified services list before relying on the indemnity for commercially-sensitive work. The lawyer answer is to read the list. The practical answer is to assume preview-tier coverage is uncertain until Google moves NB2 to GA.

SynthID watermarking is embedded on outputs — invisible to humans, detectable algorithmically. C2PA credentials are also embedded. No visible watermark on standard output, which matters for any ad where the watermark would compromise the visual.

FAQ

Is Nano Banana 2 better than GPT Image 2 for ads?

Use case determines the answer. Image Arena puts GPT Image 2 ahead on text-to-image at 1,398 vs Nano Banana 2 at 1,268 as of May 2026. For text-led ads with sub-12-point body copy, GPT Image 2 has the edge. For multi-image composition (product + reference + logo in one API call), native 4K, ultra-wide aspect ratios, and batch overnight runs at half the cost, Nano Banana 2 wins. Both render headlines correctly on first prompt — the gap closes at the 1,200+ Elo level for any text at 16 points and above.

How much does Nano Banana 2 cost per ad?

$0.045 (0.5K) to $0.151 (4K) per image at standard tier per the Gemini API pricing page. 2K, the resolution most ad platforms accept without compression artifacts, is $0.101 standard or $0.050 in batch. Vertex AI matches. The Gemini consumer app is free with rate limits but does not expose thinking-HIGH or grounding. API list price is not the cost per finished ad: count every aspect ratio you ship as a separate generation.

Can Nano Banana 2 use my actual product photo?

Yes, natively. Pass the product photo as one of up to 14 reference images in a single API call. The model composes it with the rest of the prompt — no edit chain, no inpainting pass. With grounding enabled, the model also fetches current packaging trends to keep the rendered scene contemporary. This is the strongest Nano Banana 2 capability for ads and the one most reviews skip because most writers feed the model one prompt and one optional reference.

What's the difference between Nano Banana 2 and Nano Banana Pro?

Different tiers, different prices, different launch dates. Nano Banana Pro (gemini-3-pro-image-preview, launched November 20, 2025) is the Pro-tier model: higher fidelity ceiling, slower, and roughly 1.6-2× the cost at list price ($0.134 per 1K and $0.240 per 4K, against $0.067 and $0.151 for Nano Banana 2). Nano Banana 2 (gemini-3.1-flash-image-preview, launched February 26, 2026) is the Flash-tier model: faster, cheaper, native 14-image composition. NB Pro accepts six objects plus five characters; NB2 accepts ten plus four. NB2 became the default in Google Ads on the day it launched.

How long does it take to generate an ad with Nano Banana 2?

A single 4:5 + HIGH thinking + 2K generation came back in roughly 35 seconds via the Gemini API in our head-to-head test against GPT Image 2. Multi-image composites with grounding enabled can run longer. Complex prompts at thinking-HIGH approach our 120-second timeout ceiling. Drop to thinking-standard for predictable batch latency at the cost of some fidelity.

Default model swapped, workflow didn't

Google swapping its default image model on February 26 changed every Performance Max output generated that day. It did not change the production cost of shipping an ad in five aspect ratios by Tuesday. Nano Banana 2 is a real upgrade — multi-image composition in one call, native 4K, 14 aspect ratios, thinking and grounding enabled. It's also the model with the 16-IP hard block, the iterative-edit degradation, the trademark-excluded indemnification, and the silent-refusal payload pattern.

The upgrade and the failure modes ship in the same release. We pick it again because the cost-per-2K-ad is the lowest in the field and the multi-image input collapses our composition step to one API call. We've coded for every failure mode in this post.

The model is the floor. The workflow — reference + product + brand + every aspect ratio your campaign needs — is everything that comes after. For evaluating raw image generators on their own merits, our seven AI image models compared for ad creation is the broader reference. Pick a reference ad from the 14,000+ ad examples library. Drop in your product photo. AdDogs runs the rest of the stack on top of Nano Banana 2 in seconds. Five free credits, no card.